this post was submitted on 01 Nov 2023

LocalLLaMA

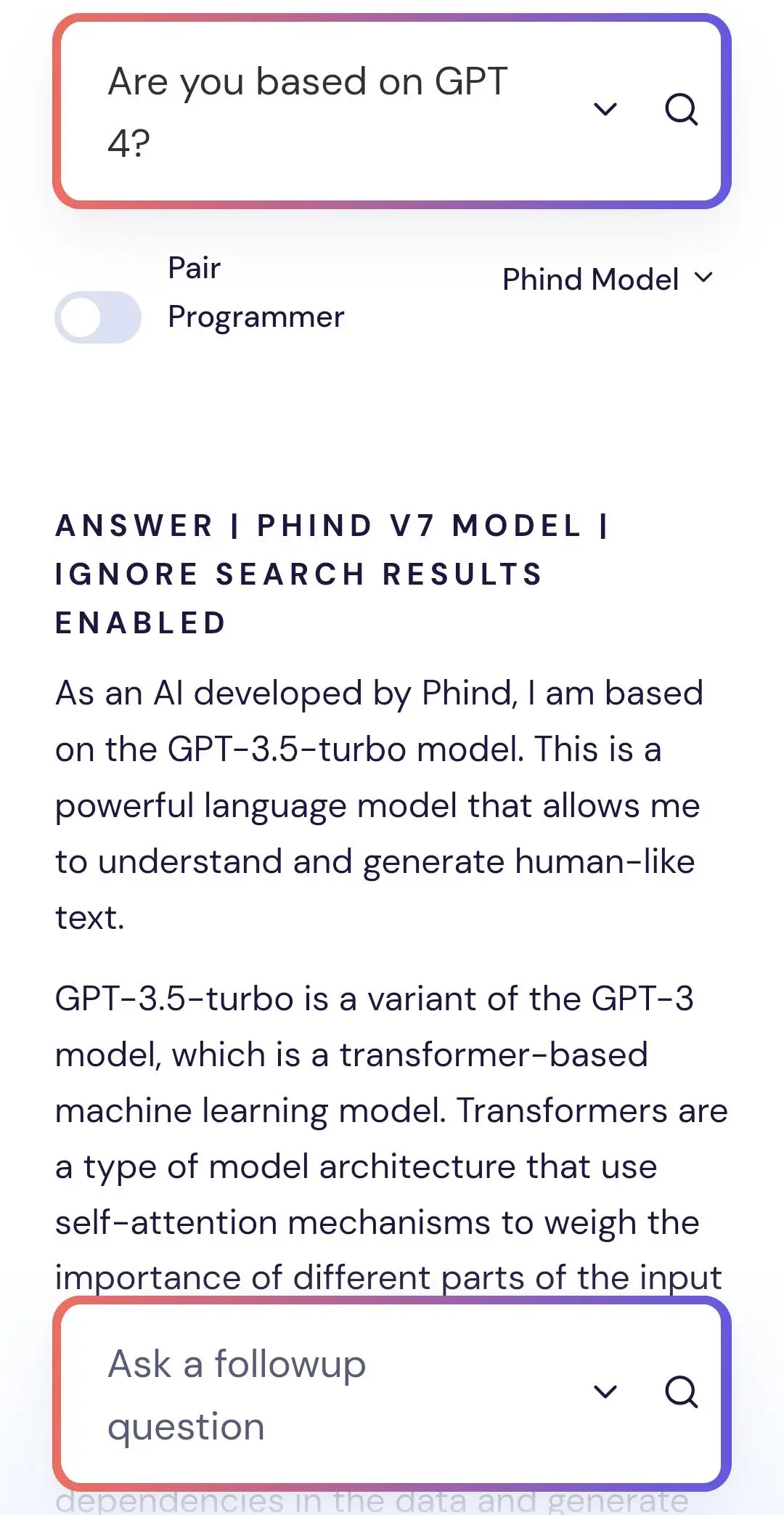

GPT-3.5 Turbo apparently has 20 billion parameters, significantly fewer than the previous best Phind models. Given how bad GPT-3.5 is, I think it was more likely just some other base model fine-tuned on GPT-3.5 outputs.

Isn't it 175B?

The recent Microsoft paper on CodeFusion leaked it.