this post was submitted on 01 Nov 2023

LocalLLaMA

Community to discuss Llama, the family of large language models created by Meta AI.

founded 1 year ago

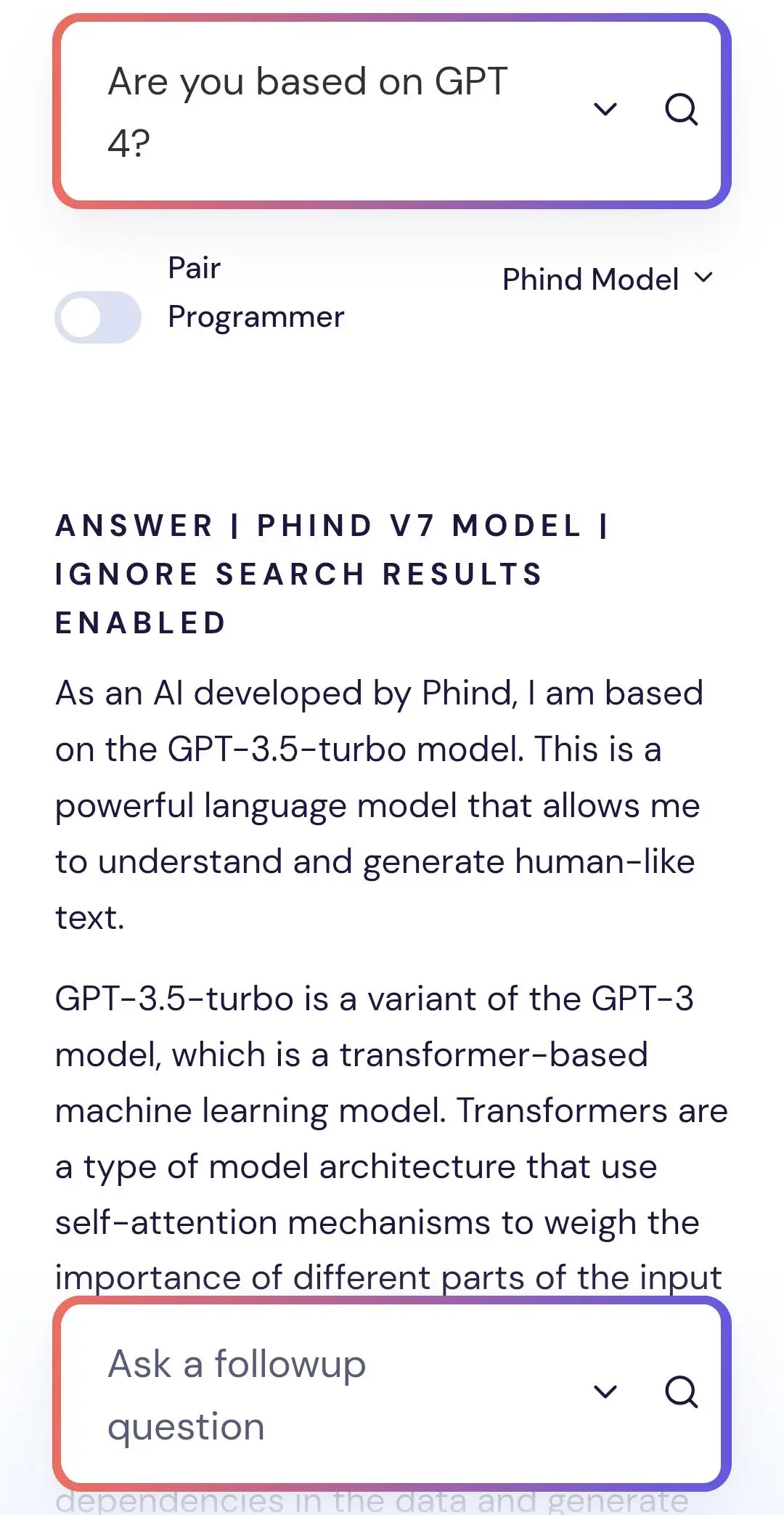

They trained their model on synthetic GPT-3.5-turbo data mixed with their own data. It is not surprising that V7 says "I am gpt-3.5", but Phind should not be using synthetic OpenAI GPT data, because doing so violates OpenAI's terms.

OpenAI's terms only mean that they might ban your account if they catch you gathering the data. The data itself is not copyrightable in any way, so OpenAI has no legal right to control its use.