I recently discovered that some popular federated instances have been using LLM-assisted moderation tooling that evaluates whether someone has said something bannable. They do this by running a script/app that sends the user’s comment history to OpenAI with the question “analyze this content for evidence of specific political ideology sentiment. Also identify any related political ideology tropes”. (The italic bits are where I've redacted the ideology they're seeking.)

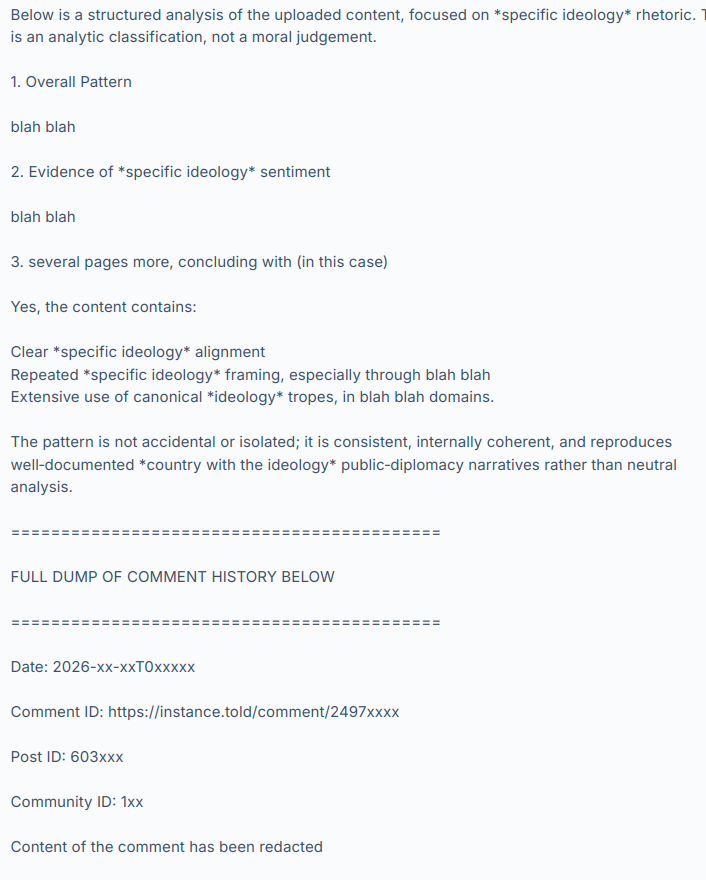

OpenAI’s LLM (they’re using GPT-5.3-mini) then responds with something like:

and so on, hundreds of comments.

I have not named the instances or people involved, to give them time to consider the results of this discussion, make any corrective changes they want and disclose their practices at their own pace and in their own way. I have also redacted the evidence to avoid personal attacks and dogpiling. Let’s focus on the system, not the individuals involved. Today these instances and people are using it, and maybe we’re OK with that because it’s being used by groups we agree with. But what if people we strongly disagree with used it on their instances tomorrow?

The use and existence of this tooling raises a lot of other questions too.

What are the risks? Fedi moderators are often unsupervised, untrained volunteers and these are powerful tools.

What safeguards do we need?

Would asking an LLM “please evaluate this person’s political opinions” give different results than “find evidence we can use to ban them” (as used in the cases I’ve seen)?

What are our transparency expectations?

Is this acceptable and normal?

Should this tooling be disclosed? (it was not – should it have been?)

If you were given a choice, would you have opted out of it?

Can we opt out?

Are there GDPR implications? Privacy implications? Should these tools be described in a privacy policy?

Are private messages being scanned and sent to OpenAI?

How long should these assessments be retained, and can we request to see them or ask for them to be deleted?

Once the user’s comments are sent to OpenAI, are they used to train their models?

What will the effect be on our discourse and culture if people know they are being politically profiled?

Where are the lines between normal moderation assistance tools, political profiling and opaque 3rd-party data processing?

I hope that by chewing over these questions we can begin to establish some norms and expectations around this technology. The fediverse doesn’t have any centralized enforcement so we need discussions like this to develop an awareness of what people want in terms of disclosure, privacy, consent and acceptable use. Then people can make choices about which instances they join and which ones they interact with remotely.

And of course there are the other issues with LLMs relating to environmental sustainability, erosion of workers’ rights, increasing the cost of living and on and on. I can’t see PieFed adding any functionality like this anytime soon. But it’s happening out there anyway so now we need to talk about it.

What do you make of this?

I think the use of AI or LLMs for the Fediverse is a fair topic of discussion. Instances are run by volunteers, and these instances are free to dictate how their communities are to be administered. These Instance Admins can determine the jurisdictions that they operate under, and the laws that they are subject to. I'd suggest then that the use of "AI" or LLM products is a choice made by each Instance.

But, just as Instance admins have these powerful choices, I believe that end users should also be given notice that these products are expected to be used, so they can decide whether to continue with their account or move. I also recall that when many "Redditors" decided to leave, they made a lot of fuss about salting their posts so that their content would not be used as training material to develop a commercial LLM. I'd point out this was a time when Meta and others were found to be combing the internet, downloading pirated materials, and using these for training purposes.

In 2026, these LLMs have already taken these pirated materials and public social media posts. There are news articles about how failed start-ups are even selling their Slack and other work-related chats as training materials as well. In time, perhaps these issues can be properly litigated in courts. But for social media Instances run by volunteers, resources are limited already. I don't think these Instances should be responsible for the privacy of the end users. Rather, good education in generating throw-away email addresses, strong passwords, and VPN use can give users real choices.

While there's an argument that posts should not have any expectation of privacy, that doesn't mean we don't collectively share an interest in the value of privacy - a human right. I don't believe users who sign up for accounts on the Fediverse and are asked for their email to set up an account, expect in turn that information to just be published to the world with full, open access without some kind of notice or choice to opt out, for example. Ultimately though, I believe the issue must be dealt with at the government level, and that means people getting pitted against professional lobbyists and politicians.

I also want to clarify that there will be times when intervention is necessary. We're here to join together in community, and "AI" or LLM activity that ultimately attacks that objective should be a top issue. For example, I'm not sure anyone here would be comfortable with "AI" duplicating an Instance and impersonating the community members within it. Yet that did happen on Mastodon. I recall the community responding, making reports and complaints to ultimately get the instance taken down. Another example would be AI or LLM accounts that do not identify themselves as bots and are here to post on the Fediverse to astroturf issues or manipulate discussion. These are clear threats to proper discussion.

But I don't want to digress too far from the original concern, which was how people feel about LLMs processing Fediverse posts, and related issues. Assuming we are not discussing materials that are already restricted or illegal, we cannot control what people choose to share on these platforms of themselves, and I don't suggest we even attempt or consider it. But we can try to control how much information these platforms retain about their users - and I suggest that should be as close to zero or nil as possible. In this way, even if an Instance admin faces terrible pressure from a state, the platform itself has as close to nothing additional to report or share besides the face value posts of an account.

I'd also like to point out that we all do some form of labelling or profiling as we go over posts or read. Is there a difference between what we do already, and what an LLM does to create an opinion to profile a collection of posts? Humans get it wrong all the time, as would any LLM for that matter. Computers are valued because they're good at copying and pasting information. What exactly changes if one person copies and pastes for free and for themselves, vs another who copies and pastes on behalf of a third party for a fee? I'm not really inviting philosophical discussion, this is mostly a question for myself. Whether the copy and paste procedure is done once by hand or thousands of times by computer, I'm still weighing the question.

I think it comes down to exploitation of asymmetric information and the appropriate use of the profits from this exploitation. But I suppose that's always been an evergreen issue.

I wanted to add that while it's good to talk about LLMs, part of this discussion is to explore either alternatives to their use or ways to pair the use of LLMs with real human interaction. CEO Hawk for Discourse recently shared a blog post deep dive into Digg, and describes how Discourse has features in place to build trust in users and the community as a whole. Some of these features involve turning on email sign-ups and a tiered system of membership that builds trust over time and engagement, opening up more options to the user (increased post limits, access to chat, increased edit limits, options to attach files). As I recall, this includes badges that can be visible to the community to demonstrate progress and acceptance.

https://blog.discourse.org/2026/05/the-digg-lesson-why-moderation-infrastructure-matters/
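To make the tiered idea a bit more concrete, here is a minimal sketch in Python of how trust tiers might unlock options over time. Everything in it is an assumption for illustration: the tier names, the age and post thresholds, and the permissions are hypothetical and are not Discourse's or PieFed's actual values.

```python
from dataclasses import dataclass


# Hypothetical tiers: names, thresholds and permissions are illustrative only,
# not taken from Discourse or PieFed.
@dataclass(frozen=True)
class TrustLevel:
    name: str
    min_days: int          # minimum account age in days
    min_posts: int         # minimum number of posts made
    daily_post_limit: int  # how many posts per day this tier allows
    can_chat: bool
    can_attach_files: bool


# Ordered from lowest to highest tier.
LEVELS = [
    TrustLevel("new", 0, 0, 5, False, False),
    TrustLevel("basic", 7, 10, 20, True, False),
    TrustLevel("member", 30, 50, 100, True, True),
]


def trust_level(account_age_days: int, post_count: int) -> TrustLevel:
    """Return the highest tier whose requirements the account meets."""
    current = LEVELS[0]
    for level in LEVELS:
        if account_age_days >= level.min_days and post_count >= level.min_posts:
            current = level
    return current


if __name__ == "__main__":
    level = trust_level(account_age_days=40, post_count=12)
    print(level.name, level.daily_post_limit, level.can_attach_files)
```

The point of a design like this is that trust comes from time and engagement that community members can see, rather than from an opaque assessment made by a third party.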

I recognize that CEO Hawk takes a similar view of LLMs, in the sense that if LLM use is rampant, a community cannot differentiate between authentic engagement and the astroturfing I raised as a concern. I agree that this is a threat to proper discussion, and the Fediverse must answer this threat. I do agree that a "trust system" provides a lot of answers, and in some ways PieFed has already introduced some of these trust-based features.