Just let anyone scrape it all for any reason. It’s science. Let it be free.

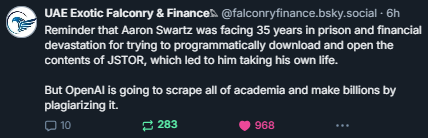

The OP tweet seems to be leaning pretty hard on the "AI bad" sentiment. If LLMs make academic knowledge more accessible to people that's a good thing for the same reason what Aaron Swartz was doing was a good thing.

On the whole, maybe LLMs do make these subjects more accessible in a way that's a net-positive, but there are a lot of monied interests that make positive, transparent design choices unlikely. The companies that create and tweak these generalized models want to make a return in the long run. Consequently, they have deliberately made their products speak in authoritative, neutral tones to make them seem more correct, unbiased and trustworthy to people.

The problem is that LLMs 'hallucinate' details as an unavoidable consequence of their design. People can tell untruths as well, but if a person lies or misspeaks about a scientific study, they can be called out on it. An LLM cannot be held accountable in the same way, as it's essentially a complex statistical prediction algorithm. Non-savvy users can easily be fed misinfo straight from the tap, and bad actors can easily generate correct-sounding misinformation to deliberately try and sway others.

ChatGPT completely fabricating authors, titles, and even (fake) links to studies is a known problem. Far too often, unsuspecting users take its output at face value and believe it to be correct because it sounds correct. This is bad, and part of the issue is marketing these models as though they're intelligent. They're very good at generating plausible responses, but this should never be construed as them being good at generating correct ones.

Except it won’t. And AI will be pay-to-play.

That would be good if they did that, but that is not the intent of the org, the purpose of the tool, or the expected (or even available) outcome.

It's important to remember this data is not being scraped to make it available or presentable, but to make a machine that echoes human authorship more convincingly.

On an extremely simplified level, it doesn't want to answer 1+1=? with "2", it wants to appear like a human confidently answering an arithmetic question, even if the exchange is "1+1=?" "yes, 2+3 does equal 9"

Obviously it can handle simple sums; this is just an illustrative example.

To paraphrase Nixon:

"When you're a company, it's not illegal."

To paraphrase Trump:

"When you're a company, they just let you do it."

RIP AARON

Yes, but it was MIT that pushed the feds to prosecute.

Never forget to name the proper perp.

Disgusting. And we subsidize their existence 🤡

https://en.wikipedia.org/wiki/Carmen_Ortiz

Ortiz said "Stealing is stealing whether you use a computer command or a crowbar, and whether you take documents, data or dollars. It is equally harmful to the victim whether you sell what you have stolen or give it away."

MIT releases financials and endowment figures for 2024:

The Institute’s pooled investments returned 8.9 percent last year; endowment stands at $24.6 billion

Who writes the laws? There's your answer.

I'm curious why https://www.falconfinance.ae/ cares about this though.

What the hell are they selling? https://www.falconfinance.ae/falcon-securities/

I did some digging. It's a parody finance website that makes it seem like you can invest in falcons and make a blockchain (flockchain) with them. Dig a little further, go to the linked forum, and you'll see it's just a community of people shitposting (mostly).

Remember what you learned in school: Working as a team to solve a test or problem is unacceptable!!! Unless you are a company town.

RIP Aaron

Anything the rich and powerful do retroactively becomes okay

And in due time, we'll hack OpenAI and get the sources from the chat module.

I've seen a few glitches before that made ChatGPT just drop entire articles in varying languages.

AI models don't actually contain the text they were trained on, except in very rare circumstances when they've been overfit on a particular text. This is considered a training error, and much work has gone into preventing it; it usually happens when a great many identical copies of the same data appear in the training set. An AI model is far too small to hold its training data; there's no way that data can be compressed that much.
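For a rough sense of scale, here's a back-of-envelope sketch of that size argument. Every number in it is a made-up round figure for illustration (a hypothetical model with 7 billion parameters stored at 2 bytes each, trained on 2 trillion tokens at roughly 4 bytes of text per token), not the specs of any real model.

```python
def compression_ratio_needed(n_params, bytes_per_param, n_tokens, bytes_per_token):
    """How many times smaller the model is than the raw text it was trained on."""
    model_bytes = n_params * bytes_per_param
    data_bytes = n_tokens * bytes_per_token
    return data_bytes / model_bytes


# Hypothetical, illustrative figures only (not taken from any real model).
N_PARAMS = 7_000_000_000        # assumed parameter count
BYTES_PER_PARAM = 2             # assumed 16-bit weights
N_TOKENS = 2_000_000_000_000    # assumed training-set size in tokens
BYTES_PER_TOKEN = 4             # assumed average bytes of text per token

ratio = compression_ratio_needed(N_PARAMS, BYTES_PER_PARAM, N_TOKENS, BYTES_PER_TOKEN)
print(f"Model would need to compress its training text about {ratio:.0f}x")
```

Under those assumptions the weights would have to store the text at a ratio in the hundreds to one, while general-purpose lossless text compressors typically manage something much closer to single digits.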

Thanks! That actually makes a lot of sense.

Welp, guess I was wrong. So back to .edu scraping!

I'm still blaming MIT for that!

Is OpenAI profitable now?

Is OpenAI open still?

No and no.

Never really was

Wait, since when has it not been? Or are you telling me that vastly the fastest growing platform in history with multiple payment gates (subscriptions, pay per token, licensing etc.) was not profitable for some reason?

It's following the Amazon monopolization model.

Or are you telling me that vastly the fastest growing platform in history with multiple payment gates (subscriptions, pay per token, licensing etc.) was not profitable

Are you not aware that 99 times out of 100, if you see a tech company growing rapidly, it's completely unprofitable and not even attempting to be profitable yet? It's called blitzscaling, and it's pretty clearly what OpenAI is attempting. If you see a tech company growing quickly, you should assume it's unprofitable until proven otherwise, not the opposite lol.

Running those datacenters is extremely expensive.

The cost is to the whole world, because they consume enormous amounts of energy and produce essentially nothing. Like bitcoin miners.

Worse than Bitcoin miners: AI seems to have the full-throated support of capital (rather than a single faction), who see it as the next big form of automation.

Not sure if you are joking but... it does not appear to be making anywhere near the amount of money that has been invested in it.

It costs a stupendous amount of money to develop the models, to train them, to rent out or just buy the hardware needed to do this, to pay for the electrical power to do this.

No, and AI almost certainly never will be. However, investor money keeps coming, so it doesn't matter.