Yep, that's a pretty good comparison!

I'm curious what you mean by sourcing training data in an ethical way. I know OpenAI has come under well-deserved scrutiny for apparently including paywalled content in their training data without purchasing it, which is quite unethical. Aside from that instance, I'm interested in hearing other concerns for my own education.

In general, there are loads of models on places like Hugging Face that are fully open source, and many of them list their training data sources.

I believe that for Microsoft's new Phi-3 models they actually generated synthetic training data themselves, which is an interesting approach that seems to yield good results.

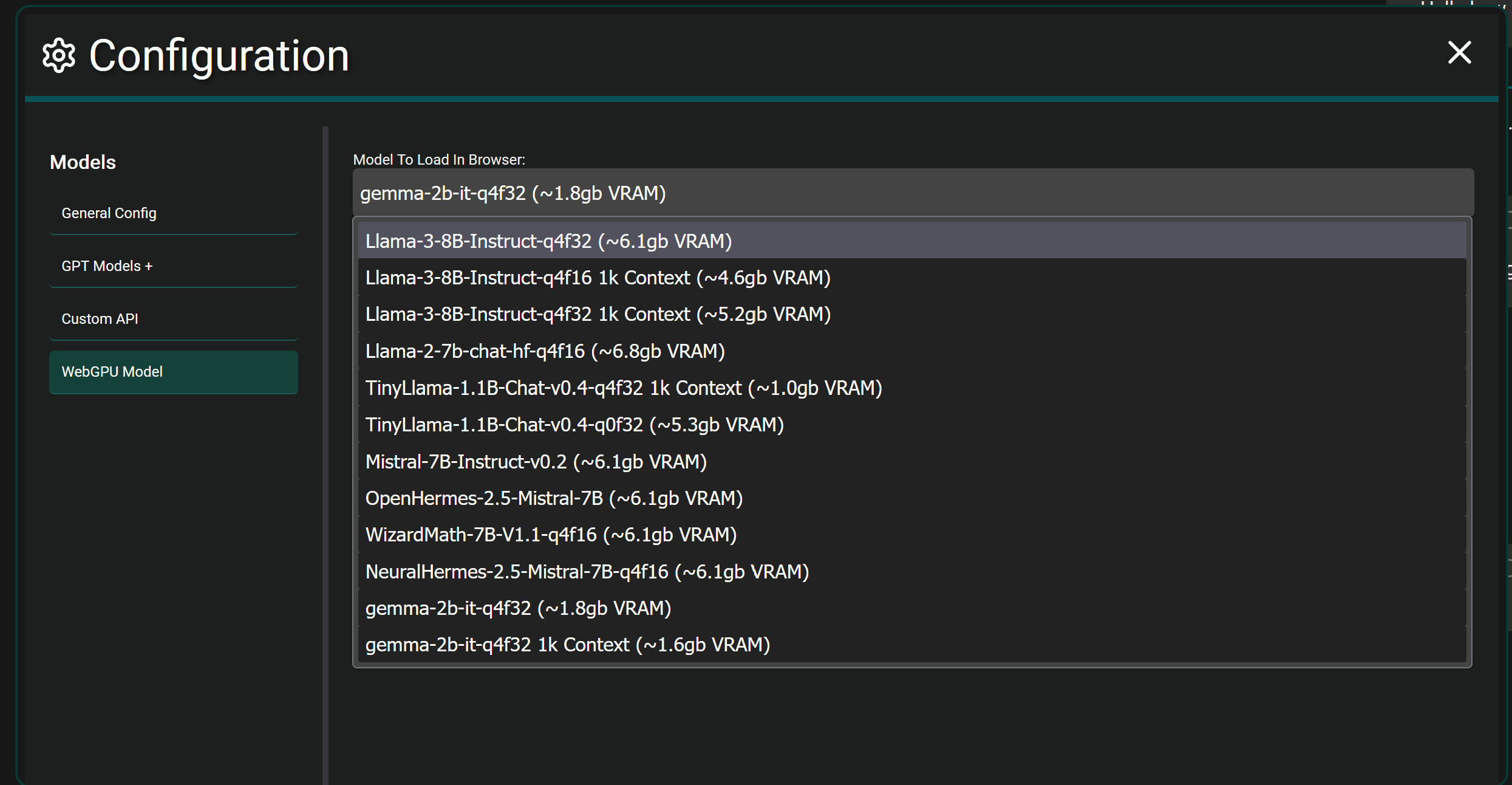

In the open source LLM world, the new Meta Llama 3 models are the latest and greatest, and I haven't seen any cause for concern with them yet. Might be worth looking into those!

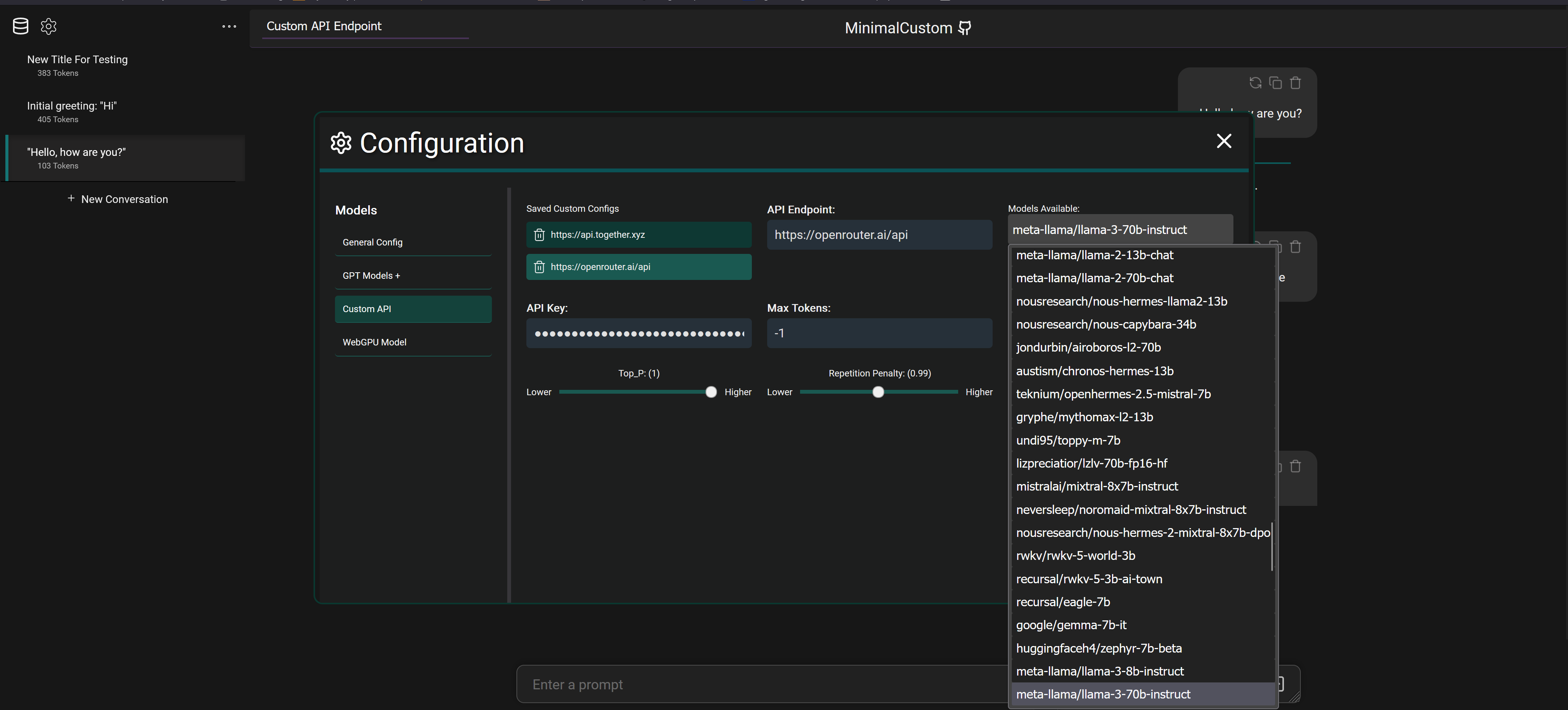

This project is entirely web based, built with Vue 3. It doesn't use LangChain, and honestly I haven't looked into it before, but I do see they offer a JS library I could use. I'll definitely be looking into that!

As a result, there is currently no LLM function calling, and from what I remember apps like LM Studio don't support function calling when hosting models locally. It's definitely on my list to add the ability to retrieve outside data, like searching the web and generating a response from the results.
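
For anyone wondering what that could look like without function calling, here's a rough sketch: the app runs the search itself and pastes the top results into the prompt before sending it to the model. To be clear, `SearchResult`, `buildAugmentedMessages`, the `maxResults` default, and the prompt wording are all made up for illustration and aren't part of the project today.

```typescript
// Hypothetical shape of one web search hit; a real search API would define its own fields.
interface SearchResult {
  title: string;
  url: string;
  snippet: string;
}

// Chat message in the common role/content format used by OpenAI-style chat endpoints.
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

// Builds the messages for a single chat request, placing the search results
// in the system prompt so the model can answer from them without function calling.
function buildAugmentedMessages(
  question: string,
  results: SearchResult[],
  maxResults = 3, // assumed cap to keep the prompt short
): ChatMessage[] {
  // Number each kept result so the model can cite it as [1], [2], ...
  const context = results
    .slice(0, maxResults)
    .map((r, i) => `[${i + 1}] ${r.title} (${r.url})\n${r.snippet}`)
    .join("\n\n");

  // Fall back to a plain instruction when the search returned nothing.
  const system =
    context.length > 0
      ? `Answer using the search results below and cite them by number.\n\n${context}`
      : "No search results were found. Answer from your own knowledge and say so.";

  return [
    { role: "system", content: system },
    { role: "user", content: question },
  ];
}
```

The returned array could then go in as the `messages` field of whatever chat request the app already sends to the model, so the model side wouldn't need to know a search happened at all.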