That leads us to John Gabriel’s Greater Internet Fuckwad Theory

I don't have comments on the rest of your post, but I absolutely hate how that cartoon has been used by people to claim that they are otherwise "good" people who are simply assholes on the internet.

The rebuttal is this: This person, in real life, chose to go on the internet and be a "total fuckwad". It's not that adding anonymity changed something about them; they were fuckwads to begin with, and with a much lower chance of being held accountable, they are free to express it.

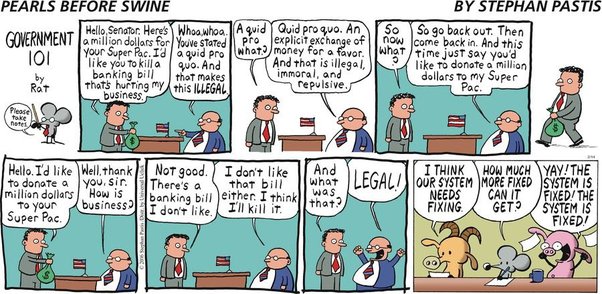

This is the result of capitalism - corporations (aka the rich selfish assholes running them) will always attempt to do horrible things to earn more money, so long as they can get away with it and perhaps pay only relatively small fines. The people who did this face no jail time and no real consequences - this is what unregulated capitalism brings. Corporations should not have rights, and they should not shield the people who run them - those people need to face prison and personal consequences. (edited for spelling and missing word)