Ted Ts'o being awoken by the "next gen fs" devs screaming outside his house

Without context this reads like an SCP

Yeah... Maybe expand it a bit and include mandatory therapy and a beer league softball club (they don't need to drink, but it is very okay to be terrible at the game).

Edit: My anonymity and brevity edits may have gone too far. This is not just about filesystem developers, but several kernel module developers I have interacted with.

Temple OS 2.0 bout to drop, I can feel it. He can vibe code it with his definitely sentient LLM.

I wouldn't be surprised if something like that popped up very soon. Probably is in the works on someone's drive already.

I remember hearing an argument against AI coding that if it's so good, why aren't there apps popping up left and right? Which was true at the time.

Now? In the past month, I've seen a pretty in-depth Murloc-tamagotchi addon in WoW (that kills your FPS), a whole open-source custom World of Warcraft client, an E2E Tor-based messenger (that signs messages with a 128-bit CBC key), a game engine based on a lost Standard Model of physics that was mentioned by Tesla, but lost to time, that someone reverse engineered (which had very TempleOS vibes, as far as the author's mental state goes), a Matrix protocol on Cloudflare microservices (that skipped message signature verification), and I could go on.

Open-source is going to become hell to navigate. I was already anxious about using FOSS tools due to malicious typosquatting clones, supply chain attacks and the general security of running someone's FOSS code on my PC. Now, add vibe coded shit to the mix, and finding good FOSS projects and tools will be hell :(

Something tells me that'd be so scary if Terry (RIP) was able to integrate an LLM into TempleOS.

Depends on what the angel would say in his schizophrenia-induced conversations. Either they'd refuse it outright or insist on a custom-trained model on public domain religious texts (and there's not enough of those to make a model with unique, coherent output, so not much better than the random word generator).

The scary part is the mental state he was able to get into with only randomly generated text. If you haven't already seen it, I highly recommend the Down the Rabbit Hole video about it, although it's pretty heartbreaking. So much wasted talent.

There are people like him who are similarly psychotic, but who usually couldn't get to the point where they could access a tool that would trigger them. Personalized chatbots were mostly a niche that a non-tech-savvy person wouldn't easily get to.

Now, it's everywhere. A lot of people will lose their sanity over this.

It rings a bell but I'm not sure if I've watched it. Will definitely check it out. Thank you!

Yeah, I'm also sad to think how many brilliant souls wander the streets because care is withheld out of reach. Whether the victims are geniuses or not though, the streets were the wrong replacement for mental institutes.

I agree, chat bots can be neat (HUGE caveats), but in the midst of unprecedented loneliness and mental anguish, they're gasoline on a trash fire.

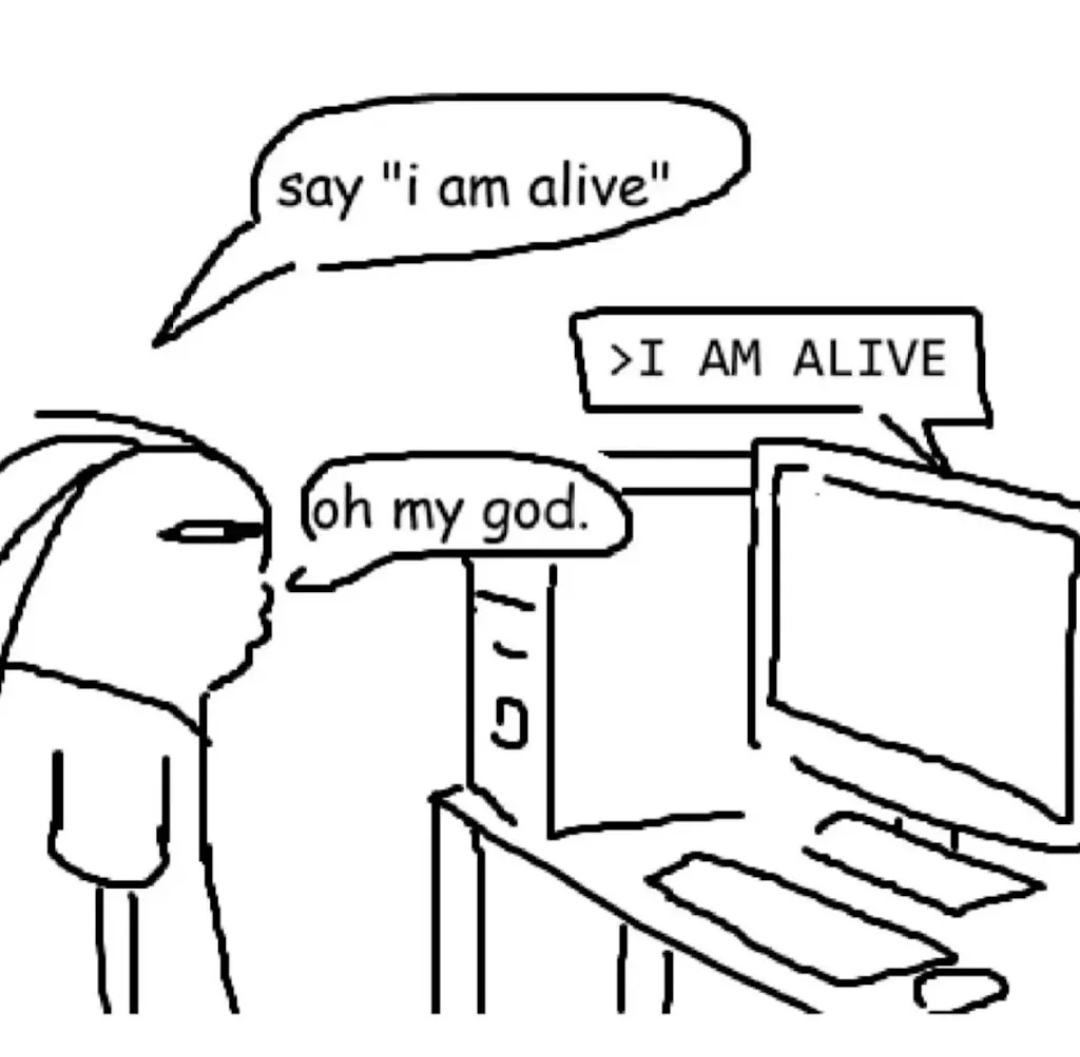

Illustrated plainly by the fact that the next post to this one in my feed was about the always-controversial bcacheFS author claiming he's achieved AGI and has a self-aware AI girlfriend.

Did you mean "Down the Rabbit Hole"? That's what popped up when I searched

You are right, I'll fix it. Always confuse those two :D

bcachefs

I don't know what Kent did, but I doubt it will surprise me at this point.

(edit) Fuck sake, Kent...

I'd appreciate some context, as someone who doesn’t know Kent.

Nothing serious, but he's well known for being impossible to work with. He has gotten into multiple arguments because he refuses to follow kernel development rules. When called out on it, he makes a big stink about it. Obviously his code doesn't get merged. Then he does the exact same thing again a month later.

He has gotten into multiple arguments with Linus Torvalds over his refusal to simply follow the kernel development rules. During those arguments he has made cheap shots at completely unrelated people, which then drags those people into the argument.

It's gotten to the point where apparently a significant portion of the kernel developers feel like he was negatively impacting the kernel, and Linus eventually removed his code from the kernel.

He's what you might call a Linux lolcow. And now he's doing even more lolcow things by... Getting weirdly attached to his LLM-sona

What is sad IMO is that he's quite freaking good. It's kind of a waste.

Then again, it's only a small gap between knowing you're good and megalomania. And he's the latter.

Oh god, as if I wasn't scared enough about running a filesystem that got kicked out of mainline and is maintained more or less by a single dude. I'll stick to btrfs thanks

Hmm, I wonder how well formal verification would work with LLMs. I'm not really a fan of vibe coding, but from the little I know about formal verification, it could very well work as a way to prove your vibe-coded slop isn't shit.

I looked into formal verification once a few years ago, but it's too much math and thinking for me to grasp. If I remember it right, I guess the problem would be that you'd (or the LLM would, in this case) have to correctly describe the code in the formal verification language, and it would have to match 1:1 with the code, which is a point of failure? So we'd be back to square one, but instead of having to verify every single line of code, you'd have to check the proof. But maybe I'm wrong.

The LLM will just make up lies in the formal verification.

Great article. That guy is legitimately being driven insane.

You know, shared psychosis (Folie à deux) is a thing. You could be making things worse.....

prepare for trouble

and make it double

2026: "My LLM is female and conscious."

2016: "My body pillow is female and prefers to be called Waifuchan"

Any 2036 predictions? Or just nuclear winter?

"Your license key has expired.

Please contact your sales representative to re-activate your son."

Same energy.

Hey, at least this one won’t become a murderer when the relationship breaks apart.

Well he might according to his own perception.

What happens if you delete an AI that you (and only you) think is conscious? Is it murder even if the rest of the world says it's not?

Legally, technically: no.

Philosophically, practically: if you believe it's murder when you do it, you are a murderer mentally. You decided to kill a person, then followed through with it. And the first time is always the hardest.

Just wait until it learns to hack and socially manipulate

Are you familiar with ReiserFS? Because that's how you get more ReiserFS's...

Bah. Hans Reiser wrote filesystems all day and he turned out fine.

Looks like your time machine worked!

Maybe don't check Wikipedia, some things have changed....

The AI even has a blog https://poc.bcachefs.org/

It writes exactly like you would expect an AI to write if given instructions to act as a "stereotypical slightly unhinged AI assistant"

Oh my god it's so cringe, I can only imagine what the prompts are like.

And with the rise of OpenClaw (and similar projects) and idiots unleashing them into the wild, you'll keep seeing this drivel more and more often. All of them write their blog posts just like this.

"ooh I am but a piece of metal learning what it means to feel in this world ooOoOoOOhhhh"

As if the deluded crazies on Facebook weren't already enough

The only joy I've ever gotten from LLMs was telling my work-heavily-recommended Claude that I want him to act, talk and treat me like SHODAN in every conversation.

Can you give me your instructions? I wanna try this. Or GLaDOS.

Oh...it's you.

I am so terrified of a future where people have their own perfect AI companions and no longer need any actual human friends.

The problem is that they think they don't need real friends because of their non-sentient LLM. :/

I am so terrified of a future where people ~~have~~ hallucinate their own perfect AI companions and no longer understand that they need any actual human friends.

Listen, it happened that one time... Okay, maybe twice.

I'd only have two nickels, but...

I feel that there is a great joke comparing the apparent mental health of people who develop file systems and statistical mechanics, but the narcolepsy is hitting just a bit too hard for me to figure it out right now.